If you’ve worked in software testing or you’re just starting to learn what QA (Quality Assurance) even means, you’ve probably noticed something: the conversation around testing has completely changed in the past couple of years.

And honestly? It changed fast.

Not too long ago, manual testing was the gold standard in software QA. A team of skilled testers would sit down, open an application, click through every screen, fill every form, tap every button, and carefully document what broke and what didn’t. It was meticulous, it was human, and it was slow, but it worked. Teams trusted it.

Then came test automation. Scripts replaced some of the repetitive clicking. CI/CD pipelines ran regression suites overnight. Life got a little faster. But even then, automation tools still needed humans to write the scripts, maintain them, and figure out what to test in the first place.

Now, enter AI agents.

And no, this isn’t just another tech buzzword cycle. This is something genuinely different. AI agents in software testing aren’t just automating tasks; they’re beginning to think through testing workflows independently. They generate test cases, predict where bugs will occur, run tests at scale, heal broken scripts automatically, and report results in plain English. The pace of change is dizzying, and QA professionals everywhere are asking the same question:

“Is AI going to take my job?”

Here’s the honest answer: not entirely. But it is going to change what your job looks like dramatically. The testing teams that thrive in the next five years won’t be the ones who ignored AI. They’ll be the ones who figured out how to work with it.

This blog is your front-row seat to that transformation. We’ll break down what AI agents do in a testing environment, which parts of manual software testing they’re changing (and which parts they can’t touch), what the best tools look like right now, and how businesses are already winning by embracing this shift.

Whether you’re a seasoned QA engineer, a software development leader, or someone just trying to understand where the industry is heading, this is for you. Buckle up. The future of software testing is moving faster than most people expected.

What Is Manual Testing in Software Testing, and Why Did It Matter for Decades?

Before we talk about what AI agents are replacing, we need to understand what we’re talking about when we say, “manual testing in software testing.”

Manual testing is exactly what it sounds like: a human tester interacts with a software application without the help of automation tools, evaluating whether the software behaves as expected. The tester reads requirements, designs test cases, executes them one by one, logs bugs, and reports findings. It’s a hands-on, brain-forward process that relies heavily on human judgment.

For decades, manual software testing was the only real option. When software applications were simpler, development cycles were longer, and deployment happened quarterly (if not annually), having a dedicated QA team that tested everything by hand made perfect sense. Teams had time. They had cycles. Manual testing caught real bugs in real ways; testers discovered issues that no script would have ever thought to look for.

And manual testing has some genuinely powerful strengths that automated tools have always struggled to replicate:

- Exploratory Testing: A manual tester can wander through an application the way a curious user would, clicking things in unexpected orders, entering weird data, and asking, “What happens if I do this?” That unpredictability is a feature, not a bug. It uncovers issues that scripted tests miss entirely.

- Usability and UX Evaluation: Whether an app “feels right” is something a human knows immediately. Is the button placement confusing? Does the flow make sense? Is the error message clear? No AI can judge subjective user experience the way a real person can.

- Domain Knowledge Application: In industries like healthcare, finance, or legal tech, testers who understand the business context can identify compliance risks, logical contradictions, and real-world edge cases that automated tools would never catch.

- Ad Hoc and Regression Testing in Complex Systems: When a system’s logic is deeply interconnected, and documentation is incomplete, experienced manual testers rely on intuition and experience, skills that only develop over years of working in the domain.

But the world of software has changed enormously. Applications are now cloud-based, updated multiple times per day, expected to work flawlessly across hundreds of device configurations, and tested under the pressure of continuous delivery pipelines. Manual software testing services, while still essential, simply can’t scale at the speed modern software demands.

What are AI Agents and How Do They Work In Software Testing?

The term “AI agents” gets used a lot, but what does it mean in the context of software testing?

An AI agent is a software system that can perceive its environment, make decisions, and take actions to achieve a specific goal, often without step-by-step human instruction. Unlike traditional automation tools (which follow fixed scripts), AI agents are adaptive.

They learn from past behaviour, adjust their strategies, and can even generate entirely new testing approaches on the fly.

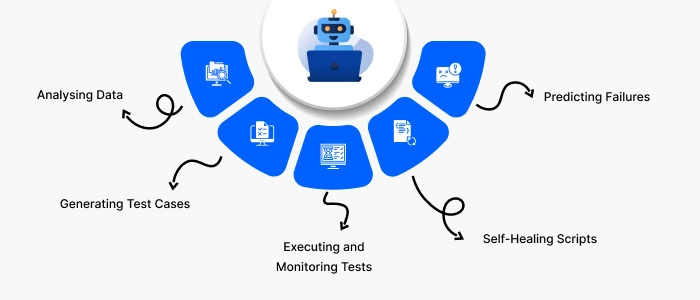

In software testing, AI agents typically work by:

- Analysing Data: They process historical test results, bug reports, code changes, and user behaviour data to identify patterns. Which features tend to break most often? Which user flows carry the highest risk? AI agents answer these questions at a scale no human team could manage manually.

- Generating Test Cases: Using natural language processing (NLP) and machine learning (ML), AI agents can read user stories, feature specifications, or requirement documents and automatically generate comprehensive test cases, including edge cases a human tester might not think to include.

- Executing and Monitoring Tests: AI agents can run thousands of tests simultaneously across multiple environments and configurations, collecting results in real time and flagging anomalies without human oversight.

- Self-Healing Scripts: When a UI changes (say, a button moves or a field gets renamed), traditional automation scripts break. AI agents detect these changes and update the test scripts automatically, with little or no manual maintenance required.

- Predicting Failures: Agentic AI in testing doesn’t just react to bugs; it predicts them. By analysing code complexity, change history, and defect patterns, AI systems can highlight which areas of an application are most likely to fail in the next release cycle.
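
To make the self-healing idea above more concrete, here is a minimal sketch of one common approach: keeping a ranked list of fallback locators and switching to the next one when the preferred locator stops matching. It is an illustration only, not how any particular tool works. The `Element` class, the `find_with_healing` function, and the sample page are all hypothetical, and the "page" is a plain Python list rather than a real browser DOM.

```python
from dataclasses import dataclass, field


@dataclass
class Element:
    """Simplified stand-in for a UI element (hypothetical, not a real driver type)."""
    tag: str
    attrs: dict = field(default_factory=dict)
    text: str = ""


def find_with_healing(page, locators):
    """Try each (attribute, value) locator in order.

    The first locator is the preferred one; the rest are fallbacks.
    Returns the matched element and the locator that found it.
    """
    for attr, value in locators:
        for el in page:
            actual = el.text if attr == "text" else el.attrs.get(attr)
            if actual == value:
                return el, (attr, value)
    raise LookupError(f"no element matched any of {locators}")


# The button used to have id="submit-btn"; a UI change renamed it to "send-btn".
page = [
    Element("input", {"id": "email", "name": "email"}),
    Element("button", {"id": "send-btn", "data-test": "submit"}, text="Submit"),
]

locators = [("id", "submit-btn"), ("data-test", "submit"), ("text", "Submit")]
element, used = find_with_healing(page, locators)

# If a fallback was needed, a real tool would record the new locator for review.
healed = used != locators[0]
print(f"matched via {used}, healed={healed}")
```

In this sketch the stale `id` locator fails, the `data-test` fallback succeeds, and the `healed` flag signals that the script should be updated. Production tools typically use much richer signals (element position, visual similarity, learned models), so treat this as a simplified picture of the principle.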

The key distinction between old-school automation and modern AI agents is autonomy. Traditional automation tools do what they’re told. AI agents figure out what needs to be done and sometimes decide how to do it differently than a human would have thought to.

How AI Agents Are Transforming the Software Testing Lifecycle

Software testing isn’t a single activity; it’s a full lifecycle, from requirements to release. And AI agents are showing up at every stage.

- Requirements Analysis: AI tools scan requirements documents to identify ambiguities, inconsistencies, or missing scenarios before a single line of code is written. This catches potential defects at the cheapest possible point, before development even begins.

- Test Planning and Prioritisation: AI systems analyse historical defect data, code complexity, and release history to help teams decide which areas need the most testing coverage. Instead of spreading effort evenly, teams can focus resources where the actual risk lives.

- Test Case Design: AI agents generate test cases based on user stories and specifications using natural language understanding. Teams that used to spend days designing test suites can now review and refine AI-generated cases in hours.

- Test Execution: AI-driven testing frameworks run thousands of tests in parallel across browsers, operating systems, screen sizes, and API configurations simultaneously. What used to take a team of testers a week can now be completed overnight or in minutes.

- Defect Detection and Reporting: AI systems don’t just find bugs; they categorise them, estimate severity, group related issues together, and present findings in dashboards that are easy for both technical and non-technical stakeholders to understand.

- Regression Testing: Every time code changes, regression testing needs to happen. AI agents know which tests to re-run based on what changed, skipping irrelevant tests and focusing on the areas most affected. This dramatically reduces regression cycle time.

- Post-Release Monitoring: AI agents can monitor live applications for anomalies, performance degradation, and unexpected user behaviour, catching production issues faster than any manual monitoring process could.
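
The test planning and prioritisation step above can be pictured as a scoring exercise. The sketch below ranks modules by a weighted blend of past defects, recent commit activity, and code complexity. The metric names, the weights, and the sample numbers are assumptions made up for illustration; real tools generally learn these relationships from data rather than using fixed weights.

```python
def rank_by_risk(modules, weights=None):
    """Rank modules from highest to lowest risk.

    Each metric is scaled by its maximum across modules so that
    metrics with different units can be combined.
    """
    # Hypothetical weights: defect history counts most, then churn, then complexity.
    weights = weights or {"past_defects": 0.5, "recent_commits": 0.3, "complexity": 0.2}
    maxima = {k: max(m[k] for m in modules.values()) or 1 for k in weights}
    scores = {
        name: sum(w * m[k] / maxima[k] for k, w in weights.items())
        for name, m in modules.items()
    }
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)


# Made-up sample data for three modules of an imaginary application.
modules = {
    "checkout": {"past_defects": 12, "recent_commits": 30, "complexity": 45},
    "search": {"past_defects": 3, "recent_commits": 8, "complexity": 20},
    "profile": {"past_defects": 1, "recent_commits": 2, "complexity": 10},
}

ranking = rank_by_risk(modules)
for name, score in ranking:
    print(f"{name}: {score:.2f}")
```

With this sample data, `checkout` ranks first, followed by `search` and then `profile`, which is where a team would concentrate testing effort first.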

The result is a testing lifecycle that is faster, smarter, and far more comprehensive than anything a purely manual approach could deliver at scale.
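
As a small illustration of the regression testing step described above, the following sketch selects only the tests that touch files changed in a commit. The `coverage_map` (which test exercises which source files) and the file names are hypothetical; in practice such a map would come from coverage data or be inferred by the tool.

```python
def select_tests(coverage_map, changed_files):
    """Return the tests whose covered files overlap with the changed files."""
    changed = set(changed_files)
    return sorted(
        test for test, files in coverage_map.items() if changed & set(files)
    )


# Hypothetical mapping from test name to the source files it exercises.
coverage_map = {
    "test_login": ["auth.py", "session.py"],
    "test_checkout": ["cart.py", "payment.py"],
    "test_search": ["search.py"],
    "test_profile": ["auth.py", "profile.py"],
}

selected = select_tests(coverage_map, ["auth.py"])
print(selected)
```

A change to `auth.py` selects `test_login` and `test_profile` and skips the other two tests. This simple set intersection is only the baseline idea; AI-driven tools may also weigh historical failure patterns when deciding what to re-run.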

What Tasks Are AI Agents Actually Replacing?

When people talk about AI agents “replacing” manual software testing, what exactly are they talking about? Here’s a clear breakdown of the tasks where AI is doing the heavy lifting:

- Repetitive Regression Testing: Running the same test cases again after every code change was always the most time-consuming and mind-numbing part of a tester’s job. AI agents handle this completely, running regression suites automatically and only flagging genuine failures.

- Basic Functional Testing: Checking that buttons work, forms submit correctly, and standard user flows complete without errors is now handled by AI-driven test automation with greater speed and consistency than manual execution.

- Data Entry and Form Validation Testing: AI agents can generate thousands of test data combinations and run them through forms at scale, covering boundary values, negative inputs, special characters, and edge cases that manual testers would take days to cover.

- Visual Regression Testing: AI-powered visual testing tools can compare UI screenshots pixel by pixel across releases, instantly detecting unintended layout changes, broken images, or misaligned elements.

- Performance Testing: AI tools monitor response times, identify bottlenecks, and simulate high load conditions automatically, providing continuous performance insights without manual test execution.

- Test Report Generation: Compiling test results into readable reports used to take significant time. AI agents generate detailed, well-organised testing reports automatically, complete with trend analysis and risk summaries.

- Script Maintenance: Perhaps most significantly, AI self-healing capabilities mean that when UIs change, test scripts update themselves. This eliminates one of the highest costs of traditional automation: the constant maintenance burden.

AI agents are replacing tasks that are repetitive, high-volume, rule-based, and data-driven. Where things follow a pattern that can be learned, AI learns it and takes over.
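
The form validation item above mentions boundary values. As a rough sketch of what generated test data for a numeric field can look like, the example below produces the classic boundary cases around a minimum and maximum and runs them against a validator. The `is_valid_age` function and its 18 to 65 range are invented for illustration.

```python
def boundary_values(minimum, maximum):
    """Return values just below, at, and just above each boundary."""
    return [minimum - 1, minimum, minimum + 1, maximum - 1, maximum, maximum + 1]


def is_valid_age(value, minimum=18, maximum=65):
    """Hypothetical validator under test: accepts ages in an inclusive range."""
    return minimum <= value <= maximum


# Pair each generated input with the validator's verdict.
cases = [(v, is_valid_age(v)) for v in boundary_values(18, 65)]
for value, accepted in cases:
    print(f"age={value}: {'accepted' if accepted else 'rejected'}")
```

Only the two out-of-range values (17 and 66) are rejected. AI-driven tools extend the same idea across many fields and data types at once, including negative inputs and special characters.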

Where Manual Testing Still Wins (And Always Will)

Here’s the nuance that gets lost in the hype: AI agents are not replacing testers. They’re replacing certain types of work that testers used to do. There’s a significant difference.

Several critical testing activities remain deeply human, and they’re not going away anytime soon:

- Exploratory Testing: When experienced testers freely explore an application without a script, following their curiosity and intuition, they uncover the bugs that nobody anticipated. AI agents work from patterns in existing data. True exploratory testing requires human creativity, and AI simply doesn’t have that yet.

- User Experience (UX) Testing: Does this app feel good to use? Is the navigation intuitive? Does the error message make sense to a real person? These are questions only humans can answer meaningfully. AI can measure load times and click counts, but it cannot judge how a product feels.

- Accessibility Testing: Ensuring that software works for users with disabilities requires human empathy and direct experience. While AI tools can flag some accessibility violations, the deeper evaluation of whether a product is truly inclusive requires human judgment.

- Domain-Specific and Compliance Testing: In regulated industries (healthcare, finance, aviation, and legal), testers need to understand not just the software but the industry context. A tester working on a healthcare application needs to know why a certain data handling issue could create a HIPAA violation, not just that data was handled differently than expected.

- Ethical and Bias Testing: As AI systems themselves become part of software products, testing whether those systems behave ethically, fairly, and without bias is a profoundly human responsibility. AI cannot objectively evaluate another AI for fairness; that evaluation requires human moral reasoning.

- Stakeholder Communication: Translating testing findings into business language, communicating risk to non-technical leadership, and advocating for quality decisions requires human intelligence, interpersonal skills, and organisational awareness.

Manual software testing services remain highly valuable not as a replacement for AI-driven approaches, but as the indispensable human layer that gives AI testing its context, direction, and meaning.

The Rise of Agentic AI: The Next Level of Test Automation

If regular AI in testing felt like a power-up for your QA team, agentic AI feels like an entirely different game.

Agentic AI refers to AI systems that operate with a higher degree of autonomy, setting their own sub-goals, making decisions across multi-step processes, and completing complex workflows without needing a human to direct each step. In testing, this means systems that don’t just run a test suite, but decide what to test, how to test it, when to run it, and what to do when they find a problem.

Here’s what makes agentic AI different from conventional AI-powered testing:

- Multi-Step Decision Making: A traditional AI tool runs tests based on triggers. An agentic AI system can assess a new feature, decide which areas of the application it might affect, generate appropriate test cases, execute them, analyse results, and file bug reports, all as a connected workflow without human intervention at each step.

- Context Awareness: Agentic AI systems maintain an understanding of the broader testing context (what was tested before, what has changed, what risk areas exist, and what the testing goals are) and use that context to make smarter decisions throughout the process.

- Collaboration with Development: Advanced agentic AI systems can integrate directly with development workflows, flagging potential issues at the code review stage, suggesting test coverage improvements, and learning from developer feedback to improve future testing strategies.

- Continuous Learning: The more an agentic AI system tests a particular application or domain, the better it gets. It learns from every defect found, every false positive, and every release outcome, continually sharpening its testing strategy.

The implications for software quality are enormous. Teams that adopt agentic AI in their QA workflows aren’t just saving time; they’re achieving levels of test coverage and defect detection that simply weren’t possible with manual or traditional automated approaches.

Top AI-Powered Software Testing Tools

The ecosystem of AI-driven testing tools has exploded. Here’s a look at some of the most widely adopted platforms transforming how teams approach software testing:

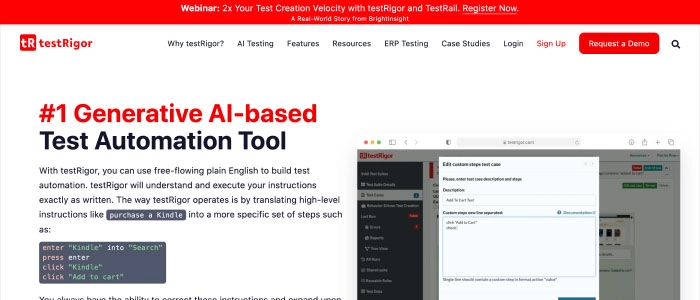

testRigor

A generative AI-based testing platform that lets testers write test cases in plain English, no coding required. testRigor is known for its extremely low maintenance overhead, with teams reporting less than 0.1% of time spent on test maintenance. It’s particularly powerful for teams that want to enable non-technical QA staff to contribute to automation.

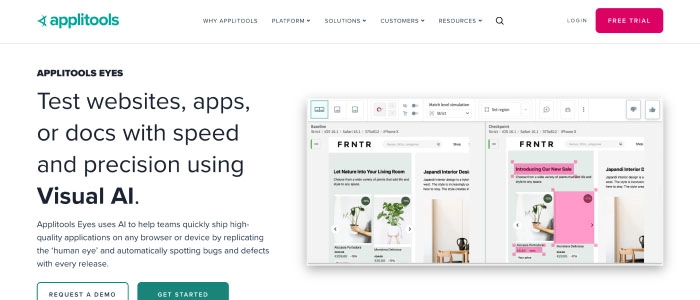

Applitools Eyes

Specialises in AI-powered visual testing. Applitools uses computer vision to compare UI screenshots intelligently, distinguishing meaningful visual regressions from irrelevant pixel-level differences. It integrates seamlessly with virtually every major test automation framework.

Mabl

A low-code, AI-native testing platform that creates, runs, and maintains tests automatically. Mabl’s auto-healing features ensure tests stay current as applications evolve, and its intelligent test generation reduces the effort of building comprehensive test suites.

Testim

An AI-powered testing tool that uses machine learning to identify stable UI elements and create more resilient automated tests. Testim is particularly well-regarded for its ability to reduce test flakiness, a major pain point in traditional automation.

Functionize

Combines NLP and ML to enable teams to write tests in natural language and have them automatically translated into working test scripts. Functionize is also notable for its intelligent root cause analysis when tests fail.

Selenium with AI Extensions

The classic web automation framework is increasingly being augmented with AI-powered plugins and add-ons that add capabilities like self-healing selectors, visual comparison, and smarter element identification, giving familiar tooling a modern boost.

Tricentis Tosca

An enterprise-grade, model-based testing solution that uses AI to optimise test case selection, reduce redundancy, and ensure that the most critical paths are always covered.

The common thread across all these tools: they reduce the burden of script writing, script maintenance, and test case generation, the tasks that consume the most time in traditional manual and automated testing workflows.

Will AI Completely Replace Manual Software Testers?

When calculators became widespread, they didn’t eliminate mathematicians. They eliminated the tedious arithmetic so mathematicians could focus on genuinely hard mathematical problems. The same dynamic is playing out in QA.

AI agents are taking over the parts of testing that were always too mechanical for the level of skill most testers bring to the table. What remains, and what grows in importance, is the work that requires uniquely human capabilities: creative problem-solving, user empathy, ethical judgment, business context, and strategic thinking.

In fact, new roles are emerging in QA that didn’t exist five years ago:

- AI Quality Engineer: Testers who specialise in validating AI-powered features, checking for model bias, hallucination, output consistency, and alignment with user intent. This role requires understanding both software testing principles and machine learning concepts.

- Quality Strategist: A senior QA professional who focuses on defining quality standards across an organisation, communicating risk to business leadership, and ensuring that AI testing tools are aligned with actual product goals.

- Prompt Engineer for Testing: Professionals who write the prompts and instructions that guide AI testing tools, ensuring that AI-generated test cases are comprehensive, relevant, and aligned with real user scenarios.

- Vibe Tester: An emerging role focused on evaluating the “feel” of AI-powered products, assessing whether an AI assistant communicates appropriately, maintains the right tone, and aligns with brand values. Entirely human, entirely irreplaceable.

The future of QA is a partnership between human insight and machine capability. The most valuable testers going forward won’t be those who can click the fastest or write the most scripts. They’ll be the ones who can guide AI systems effectively, interpret their outputs critically, and advocate for quality in ways that machines simply cannot.

How Businesses Are Benefiting From AI-Driven QA Today

The business case for AI in software testing is no longer theoretical. Companies across industries are seeing real, measurable results from integrating AI agents into their testing workflows.

- Faster Release Cycles: Organisations using AI-powered testing report significant reductions in QA cycle time. Test suites that took days to run manually are now completed in hours, sometimes minutes. This directly accelerates product delivery and time-to-market.

- Higher Test Coverage: AI agents can generate and execute far more test scenarios than a human team could manage manually. Teams regularly report 40–70% improvements in test coverage after adopting AI-driven testing approaches.

- Reduced Defect Leakage: When more tests are run and run faster, fewer bugs slip into production. AI-driven testing catches defects earlier in the development cycle, where fixing them is cheaper and less disruptive.

- Lower QA Costs Over Time: While AI testing tools require upfront investment, the reduction in manual testing hours, script maintenance time, and production incident costs typically results in significant cost savings within the first year of adoption.

- Better Team Morale: When QA engineers are freed from tedious regression execution and script babysitting, they can focus on the creative, analytical, and collaborative work they actually find fulfilling. Teams that adopt AI testing tools often report higher job satisfaction.

- Scalability Without Headcount: As applications grow more complex, AI testing scales with them without requiring proportional increases in QA team size. This is particularly valuable for fast-growing startups and enterprise organisations expanding into new markets.

Businesses investing in professional manual software testing services augmented by AI are finding that they can do more, release faster, and maintain higher quality simultaneously, a combination that was genuinely difficult to achieve in a purely manual testing paradigm.

How To Prepare Your QA Team For the AI Era

If you’re leading a QA team, or you’re a tester thinking about your career trajectory, the most important question isn’t whether AI is changing testing. It clearly is. The important question is: what do you do about it?

Here’s how smart QA professionals and teams are positioning themselves for success:

- Embrace AI Tools Early: The best way to stay relevant is to develop hands-on experience with AI testing platforms. Teams that experiment with testRigor, Mabl, Applitools, or similar tools today are building institutional knowledge that will be enormously valuable over the next five years.

- Shift from Execution to Strategy: As AI handles more test execution, human testers should invest in the strategic dimensions of QA: requirements analysis, risk assessment, quality advocacy, and stakeholder communication. These skills become more valuable as AI takes over the mechanical work.

- Learn the Language of AI: You don’t need to become a machine learning engineer. But understanding the basics of how AI models learn, what training data means, and how bias enters AI systems makes you a far more effective collaborator with AI tools and a much stronger advocate for quality in AI-powered products.

- Strengthen Exploratory Testing Skills: Since exploratory testing remains deeply human, investing in becoming a better exploratory tester is a direct investment in your long-term career value. Techniques like session-based test management, charter-based exploration, and risk-based thinking are increasingly important skills.

- Develop Domain Expertise: Deep knowledge of a specific industry, such as healthcare, fintech, e-commerce, or enterprise software, makes you a testing professional that AI cannot replace. Domain expertise is the context that gives AI testing its meaning and direction.

- Communicate Quality as a Business Value: The ability to translate testing outcomes into business language, explaining risk, cost of defects, and quality trade-offs to non-technical stakeholders, is a skill AI cannot replicate. Developing this skill makes you indispensable to any organisation’s QA leadership.

The teams and professionals that will thrive in the AI era of testing are those who treat AI as a powerful collaborator, not a threat to avoid or a magic solution to depend on blindly. Getting that balance right is both the challenge and the opportunity of this moment.

Conclusion

The era of AI agents in software testing is not a threat to the profession; it’s a recalibration. The parts of manual software testing that were always too mechanical for the talent of the people doing them are being handed to machines. What’s left for humans is richer, more strategic, more creative, and arguably more important than ever.

The testers who will struggle in this transition are those who define their professional value by the tasks AI is now handling: the regression clicks, the script maintenance, and the test report generation.

The testers who will thrive are those who define their value by what AI cannot replicate: their understanding of users, their judgment about risk, their creativity in exploration, and their ability to advocate for quality as a business imperative.

Software quality has always mattered. It matters even more in a world where software is deployed continuously, used by millions, and increasingly powered by AI systems that carry their own quality challenges.

At Sphinx Solutions, we believe that the most powerful QA approach combines advanced AI-driven capabilities with deep human expertise. Our manual software testing services are built for the modern software landscape, integrating intelligent automation where it adds speed and scale, while preserving the human judgment that ensures quality means something real.

Whether you’re looking to augment your existing QA practice with AI tools, build a comprehensive testing strategy from the ground up, or simply understand what world-class software quality looks like today, we’re here for that conversation.

FAQs:

- Will AI completely replace manual software testers?

No. AI agents are replacing specific tasks within manual testing, particularly repetitive, high-volume, rule-based work like regression testing, script execution, and basic functional checks. But human testers remain essential for exploratory testing, UX evaluation, domain-specific judgment, ethical assessment, and strategic QA leadership. The role is evolving, not disappearing.

- What is agentic AI in software testing?

Agentic AI refers to AI systems that operate with a high degree of autonomy, setting their own testing objectives, making multi-step decisions, generating test cases, executing them, and responding to results, all without constant human direction. Unlike traditional automation tools that follow fixed scripts, agentic AI systems adapt their approach based on context, data, and outcomes.

- What are the best AI-powered testing tools available today?

Leading AI testing platforms include testRigor (plain-English test automation), Applitools (visual regression testing), Mabl (AI-native low-code testing), Testim (ML-powered test resilience), Functionize (NLP-based test creation), and Tricentis Tosca (enterprise model-based testing). The best choice depends on your team’s size, technical skills, and specific testing needs.

- Is manual testing in software testing still relevant?

Absolutely. Manual testing remains critical for use cases that require human judgment: exploratory testing, UX evaluation, accessibility testing, compliance verification in regulated industries, and ethical testing of AI-powered features. The most effective QA strategies combine AI-driven automation with targeted manual testing efforts.

- How can I transition my QA career to work with AI agents?

Start by gaining hands-on experience with AI testing tools, even if just in personal or side projects. Develop expertise in the areas that AI cannot replace: exploratory testing, domain knowledge, communication skills, and strategic thinking. Learn enough about AI and ML to be an intelligent collaborator, and stay curious about how the tools continue to evolve.

- What are the main benefits of using AI agents in software testing?

The key benefits include dramatically faster test execution, higher test coverage without proportional increase in team size, earlier defect detection, reduced maintenance burden through self-healing scripts, more consistent test results, and the ability for human testers to focus on high-value, creative testing work rather than repetitive execution.
